A child reaches for the same spinning toy again, then ignores it tomorrow. A teen says they “don’t care” about rewards, but lights up when they get to control the playlist. ABA preference assessments exist for this exact problem: preferences shift, and our guesses are often wrong. So how do you identify what someone actually wants to work for—quickly, ethically, and in a way that holds up on the BCBA exam?

In this guide, you’ll learn how ABA preference assessments work, which formats to choose, common pitfalls I’ve seen in real cases, and how to translate results into stronger teaching programs and better exam performance.

What Are ABA Preference Assessments?

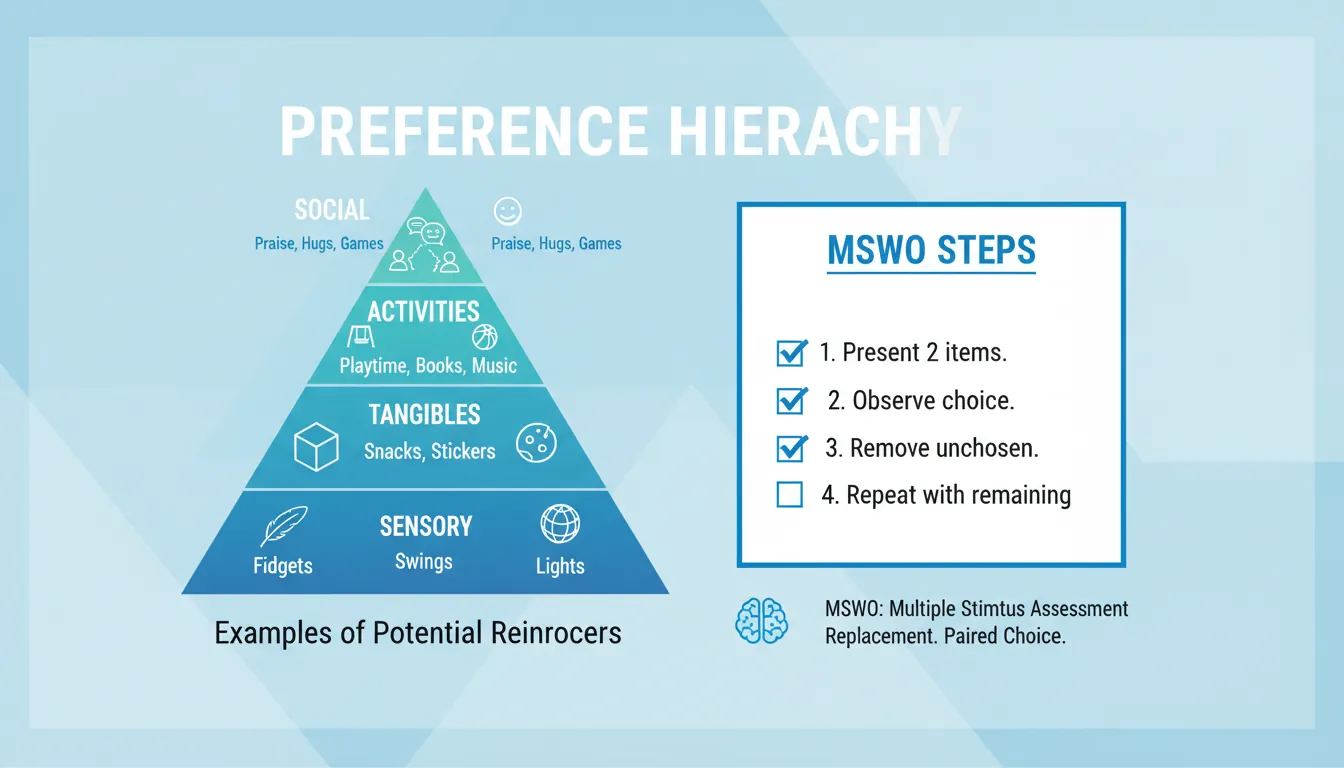

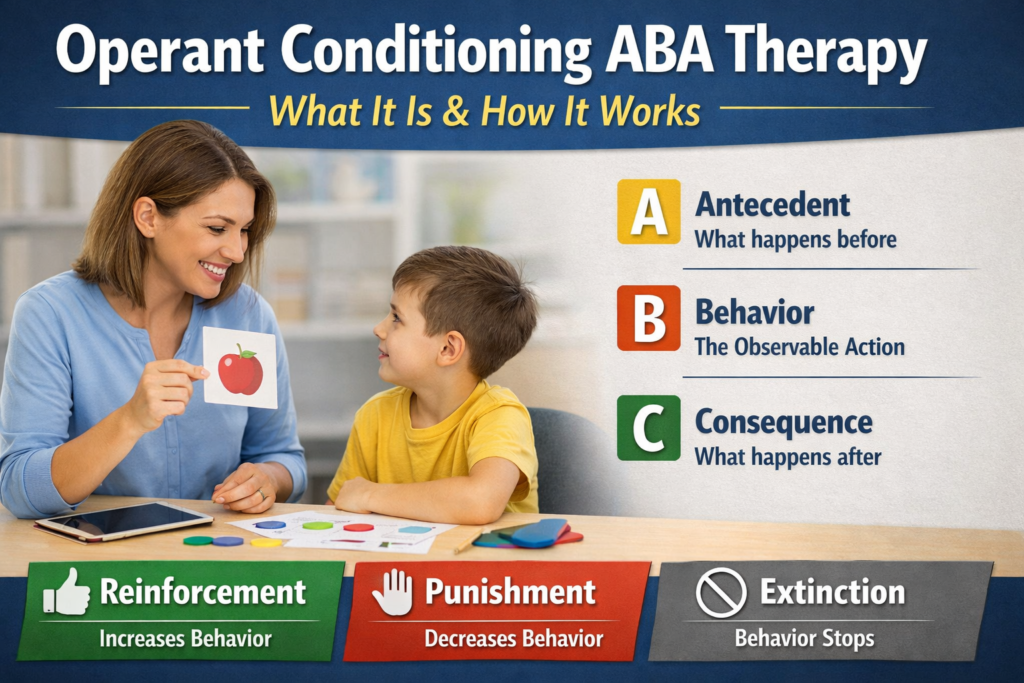

ABA preference assessments are systematic methods for identifying and ranking stimuli (items, activities, social interactions, sensory experiences) a learner is likely to choose. The output is typically a preference hierarchy—high, medium, and low preference options you can test and use in intervention.

Preference is not the same thing as reinforcement. In practice, I’ve had “high-preference” snacks fail to increase responding because the learner wasn’t hungry (motivating operations mattered), while a “medium-preference” break reliably strengthened behavior because it matched the function and timing of the task.

Key takeaways:

- Preference assessments identify potential reinforcers.

- Reinforcer assessments confirm what actually increases behavior.

- Preferences change—so reassess on a schedule, not just at intake.

For BCBA study alignment and exam-style framing, see our BCBA exam guide to preference assessments.

Why ABA Preference Assessments Matter (Clinical + Exam)

In day-to-day ABA, poor reinforcement is one of the fastest ways to create stalled learning, escape-maintained problem behavior, and staff burnout. On the BCBA exam, ABA preference assessments show up in scenarios where you must choose the best assessment type, interpret a hierarchy, or troubleshoot weak reinforcement.

They support:

- Faster skill acquisition (stronger, more consistent reinforcers)

- Better session cooperation (less coercion, more choice)

- More accurate programming (matching reinforcers to context)

- Stronger ethical practice (individualized, assent-informed options)

They also connect to functional assessment: if you know why behavior occurs (for example, attention, escape, or access to tangibles), you can select reinforcers that compete with that function. For a refresher, see functional behavior assessment (FBA).

Quick Decision Guide: Which Preference Assessment Should You Use?

No single format wins every time. The best choice depends on time, learner skills, and how precise you need the hierarchy to be.

Use this mental shortcut:

- Need fast? Start with brief observation + MSWO.

- Need precise ranking? Use paired-stimulus.

- Learner can’t scan arrays well? Try single-stimulus.

- You’re updating a known set? Use MSWO or free-operant.

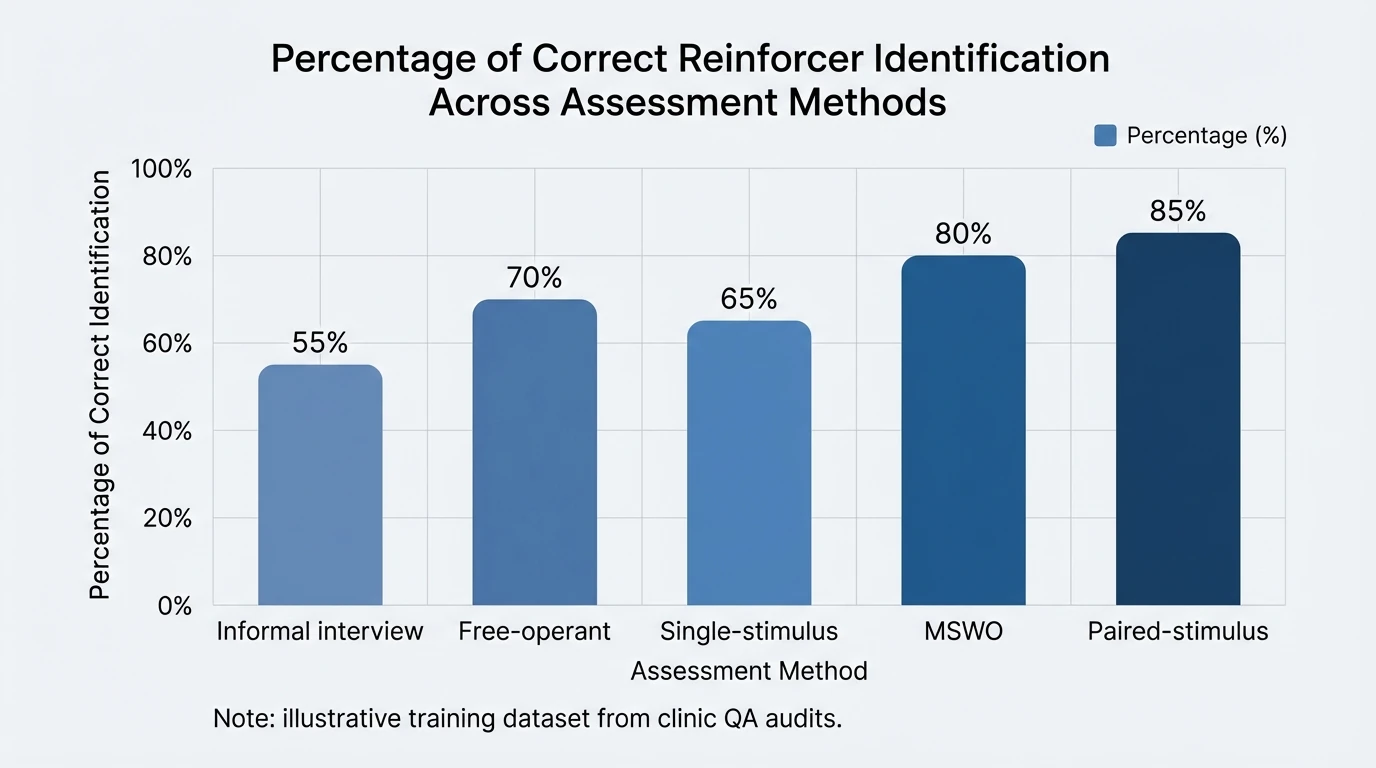

| Method | Best for | Pros | Cons | Time required | Typical output |
|---|---|---|---|---|---|
| Free-operant | Learners who can move around and engage independently; naturalistic settings | Low demands; minimal prompting; good ecological validity; can sample many items at once | May miss low-salience items; influenced by proximity/novelty; requires clear observation of engagement | Low–Moderate (e.g., 5–15 min) | Engagement-based preference; often a ranked hierarchy (by duration/frequency) |
| Single-stimulus | Learners with limited scanning/choice skills; initial screening | Very simple; useful with severe problem behavior/avoidance; can identify tolerated vs avoided items | May overestimate preference; no direct comparison among items; repeated presentations may affect responding | Low–Moderate | Approach / no approach (or accept/reject) per item; not a strong rank |
| Paired-stimulus | Building a robust hierarchy; when choices must be clear and comparable | Produces stable, differentiated rankings; reduces false positives vs single-stimulus | Time-intensive; requires discrimination between two options; possible position bias; more trials | High | Ranked hierarchy (percent selections across pairs) |
| MSW/MSWO | Efficient ranking when learner can choose among multiple items | Faster than paired-stimulus; yields hierarchy; MSWO reduces repeated access effects | Requires scanning multiple items; potential side/proximity bias; in MSW (with replacement) the chosen item returns to the array, so one item can dominate selections | Moderate | Ranked hierarchy (order of selection across arrays) |
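The mental shortcut in this section can be written down as a small lookup, which some teams may find handy for training. This is only a sketch: the function name `suggest_format`, its parameters, and especially the order in which the questions are checked are assumptions made for illustration, not a validated decision rule.

```python
def suggest_format(can_scan_arrays=True, need_precise_rank=False,
                   updating_known_set=False, need_fast=False):
    """Map the shortcut's four questions to a starting format.

    The order of the checks is an assumption for this sketch; the shortcut
    itself does not say which question should win when several apply.
    """
    if not can_scan_arrays:
        return "single-stimulus"
    if need_precise_rank:
        return "paired-stimulus"
    if updating_known_set:
        return "MSWO or free-operant"
    if need_fast:
        return "brief observation + MSWO"
    return "no shortcut rule applies; use clinical judgment"


print(suggest_format(need_fast=True))
print(suggest_format(can_scan_arrays=False, need_precise_rank=True))
```

Checking scanning skill first reflects the idea that a format the learner cannot respond to is not worth choosing, however precise it would be otherwise.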

The Main Types of ABA Preference Assessments (With Practical Notes)

1) Informal Methods (Interview + Naturalistic Observation)

Interviews (“ask”) and observation are often your first pass, especially with verbal learners. They’re also excellent for discovering idiosyncratic reinforcers (e.g., being the line leader, carrying the clipboard, choosing the order of tasks).

Limits are real, though:

- People may report what they think they should like.

- Caregivers may confuse “plays with” vs “will work for.”

- Context can distort results (fatigue, hunger, novelty).

When I’m training RBTs, I treat informal methods as hypothesis generation—not the final answer.

2) Free-Operant (No Removal)

In a free-operant assessment, you provide access to many items and measure engagement (duration, frequency of contact). This is low-pressure and works well when removing items could evoke problem behavior.

Best uses:

- Learners who struggle with transitions

- Early sessions to build rapport and assent

- Environments where choice should stay continuous
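To make the engagement measure concrete, here is a minimal sketch of how duration data from a free-operant session might be totaled and ranked. The function name `rank_by_engagement`, the `(item, start, end)` record layout, and the sample data are all hypothetical.

```python
from collections import defaultdict


def rank_by_engagement(bouts):
    """Total engagement time per item and sort from most to least.

    bouts: list of (item, start_sec, end_sec) tuples, one per engagement bout.
    """
    totals = defaultdict(float)
    for item, start, end in bouts:
        totals[item] += end - start
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


# Made-up observations from one short session (seconds from session start).
bouts = [("blocks", 0, 90), ("tablet", 95, 300),
         ("blocks", 310, 340), ("bubbles", 345, 360)]
ranking = rank_by_engagement(bouts)
for item, seconds in ranking:
    print(f"{item}: {seconds:.0f} s")
```

Items that were available but never touched do not appear in the output, so it can help to note them separately.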

3) Single-Stimulus (SS)

You present one item at a time and record approach/engagement. This format is simpler, but it can overestimate preference because there’s no competing choice. It’s often appropriate when scanning an array is hard or when you’re working with very limited repertoires.
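A single-stimulus data sheet usually reduces to approach percentages per item. The sketch below assumes each trial is recorded as a hypothetical `(item, approached)` pair; the function name and sample data are illustrative only.

```python
from collections import Counter


def approach_percentages(trials):
    """Return {item: percent of presentations on which the learner approached}.

    trials: list of (item, approached) tuples, where approached is True/False.
    """
    presented = Counter(item for item, _ in trials)
    approached = Counter(item for item, did in trials if did)
    return {item: 100 * approached[item] / presented[item] for item in presented}


# Made-up data: three items, two presentations each.
trials = [("bubbles", True), ("puzzle", False), ("bubbles", True),
          ("puzzle", True), ("slime", False), ("slime", False)]
summary = approach_percentages(trials)
print(summary)
```

Because nothing competes with the presented item, several items can reach 100%, which is the overestimation risk described above.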

4) Paired-Stimulus (PS) / Forced-Choice

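Two items are presented; the learner selects one. This usually produces the clearest hierarchy, but it takes longer because the number of trials grows quickly with the number of items: n items produce n(n-1)/2 unique pairs, so 8 items already require 28 trials before any counterbalancing.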

Clinical tip I’ve learned the hard way: control for position bias by counterbalancing left/right placement, or you may “discover” a preference for the left side of the table.
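One way to build counterbalancing into the trial list is to present every pair twice, once in each left/right arrangement, and then score percent selected. This is a sketch under that assumption (other counterbalancing schemes exist); the names `build_trials` and `percent_selected` and the simulated learner are hypothetical.

```python
from itertools import combinations
import random


def build_trials(items, seed=0):
    """Return shuffled (left, right) trials with each pair shown in both positions."""
    trials = []
    for a, b in combinations(items, 2):
        trials.append((a, b))
        trials.append((b, a))
    random.Random(seed).shuffle(trials)  # fixed seed keeps the order reproducible
    return trials


def percent_selected(items, choices):
    """choices: list of ((left, right), picked). Returns {item: percent chosen}."""
    presented = {item: 0 for item in items}
    chosen = {item: 0 for item in items}
    for (left, right), picked in choices:
        presented[left] += 1
        presented[right] += 1
        chosen[picked] += 1
    return {item: 100 * chosen[item] / presented[item] if presented[item] else 0.0
            for item in items}


items = ["bubbles", "crackers", "music", "tablet"]
trials = build_trials(items)
# Simulated learner who always picks the alphabetically first item.
choices = [(pair, min(pair)) for pair in trials]
scores = percent_selected(items, choices)
print(len(trials), scores)
```

With this scheme each item appears on the left exactly as often as on the right, so a pure side bias shows up as scores near 50% for every item rather than as a false preference.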

5) Multiple Stimulus Without Replacement (MSWO)

MSWO is widely used because it’s efficient and still yields a ranked list. Present an array, let the learner pick one, remove it, then repeat with remaining items.

Strengths:

- Efficient for clinics and schools

- Good balance of speed + ranking quality

- Easy for teams to implement with fidelity

Common error: re-presenting the chosen item too soon “because it keeps them happy,” which undermines the without-replacement logic and distorts the hierarchy.

Step-by-Step: How to Run an MSWO (Clean, Ethical, Exam-Ready)

This is the format I most often see in BCBA exam vignettes because it’s practical and data-friendly.

1. Select stimuli (5–7 is common) based on observation/interview.

2. Ensure motivation (e.g., don’t run edible trials right after lunch).

3. Standardize instructions (simple, consistent SD like “Pick one.”).

4. Present array with clear spacing; randomize positions across trials.

5. Allow selection; deliver brief access (e.g., 20–30 seconds).

6. Remove chosen item from the array (without replacement).

7. Repeat until all items are selected or learner stops selecting.

8. Score selection order and convert to ranks.
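The final scoring step can be sketched as averaging each item's selection position across sessions. This is one simple convention, not the only one; the function name `mean_ranks`, the rule that unselected items receive the last rank, and the sample sessions are assumptions for illustration.

```python
def mean_ranks(sessions, items):
    """Average each item's selection position across MSWO sessions.

    sessions: list of lists, each giving one session's selection order.
    Items never selected in a session are assigned the last rank (an assumption).
    """
    totals = {item: 0 for item in items}
    for order in sessions:
        for item in items:
            rank = order.index(item) + 1 if item in order else len(items)
            totals[item] += rank
    means = {item: totals[item] / len(sessions) for item in items}
    return sorted(means.items(), key=lambda kv: kv[1])  # lowest mean rank first


items = ["bubbles", "tablet", "puzzle", "crackers", "music"]
sessions = [
    ["tablet", "music", "bubbles", "crackers", "puzzle"],
    ["tablet", "bubbles", "music", "puzzle"],  # learner stopped before crackers
    ["music", "tablet", "bubbles", "crackers", "puzzle"],
]
hierarchy = mean_ranks(sessions, items)
for item, rank in hierarchy:
    print(f"{item}: mean rank {rank:.2f}")
```

A lower mean rank indicates an item chosen earlier on average. Items with close means (crackers and puzzle in this made-up data) are best treated as roughly tied rather than strictly ordered.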

Data you want:

- Selection order per session

- Non-selections/latency (important if motivation is low)

- Notes on problem behavior or avoidance (context matters)

Turning Preference into Reinforcement: Don’t Skip This Step

A preference hierarchy is a starting point. To confirm reinforcement, you need to see whether the stimulus increases future responding under similar conditions.

Practical ways to verify:

- Brief reinforcer assessment embedded into teaching (AB comparison)

- Progressive ratio “work requirement” probes for high-stakes programs

- Monitoring skill acquisition rate when reinforcers change
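Two of these checks reduce to simple arithmetic, sketched below. The function names `compare_phases` and `break_point`, the doubling ratio schedule, and the sample numbers are hypothetical; how large a change counts as meaningful remains a clinical judgment this code does not make.

```python
from statistics import mean


def compare_phases(baseline, with_stimulus):
    """Compare mean responses per minute in an A (baseline) and B (stimulus) phase."""
    a, b = mean(baseline), mean(with_stimulus)
    return {"baseline_mean": a, "stimulus_mean": b, "change": b - a}


def break_point(schedule, responses_emitted):
    """Return the last ratio requirement fully completed (0 if none)."""
    completed = 0
    remaining = responses_emitted
    for requirement in schedule:
        if remaining < requirement:
            break
        remaining -= requirement
        completed = requirement
    return completed


ab = compare_phases([2, 3, 2], [5, 6, 7])
bp = break_point([1, 2, 4, 8, 16], responses_emitted=10)
print(ab, bp)
```

A higher break point for one stimulus than another under the same schedule suggests the learner will work harder for it, which is the question a preference rank alone cannot answer.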

If behavior is severe or the function is unclear, reinforcement decisions should sit alongside functional assessment logic. When you’re sorting assessment options on the exam, it helps to understand how the approaches differ; see our comparison of functional analysis vs. descriptive assessment.

Common Mistakes in ABA Preference Assessments (and How to Fix Them)

- Mistake: confusing preference with reinforcer effectiveness. Fix: do quick performance checks (does responding increase?).

- Mistake: running assessments when MOs are weak. Fix: schedule smart (before snack, after break, not after satiation).

- Mistake: too many items, too little control. Fix: start with 5–7 stimuli and rotate sets.

- Mistake: not updating the hierarchy. Fix: reassess weekly/biweekly, or when responding drops.

- Mistake: staff drift and inconsistent procedures. Fix: use a checklist, BST, and periodic IOA/treatment integrity checks.
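For the IOA check, a trial-by-trial agreement score is a common starting point. The sketch below assumes two observers each recorded the item selected on every trial; the function name `trial_by_trial_ioa` and the sample records are hypothetical.

```python
def trial_by_trial_ioa(observer_a, observer_b):
    """Percent of trials on which two observers recorded the same selection."""
    if len(observer_a) != len(observer_b) or not observer_a:
        raise ValueError("Both observers need the same non-zero number of trials")
    agreements = sum(a == b for a, b in zip(observer_a, observer_b))
    return 100 * agreements / len(observer_a)


# Made-up records from one MSWO session scored by two staff members.
primary = ["tablet", "music", "bubbles", "crackers", "puzzle"]
secondary = ["tablet", "bubbles", "music", "crackers", "puzzle"]
ioa = trial_by_trial_ioa(primary, secondary)
print(f"IOA: {ioa:.0f}%")
```

A low score like this one is a prompt to review definitions and retrain before trusting the hierarchy, not a reason to pick one observer's data.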

Ethical and Practical Considerations (Assent, Dignity, and Real Life)

Ethically, ABA preference assessments should support choice, reduce coercion, and respect the learner’s right to decline. In my own supervision, I’ve seen “compliance first” cultures accidentally turn preference assessments into power struggles—especially when items are removed quickly or prompts become pressure.

Better practice:

- Offer meaningful opt-out (“No thanks” honored, try later)

- Include social, activity, and autonomy-based options—not just edibles

- Avoid depriving basic needs (food/water) to “create motivation”

- Collaborate with caregivers on culturally appropriate stimuli

For evidence-based behavior intervention and assessment framing, the BACB provides foundational standards and expectations for professional conduct.

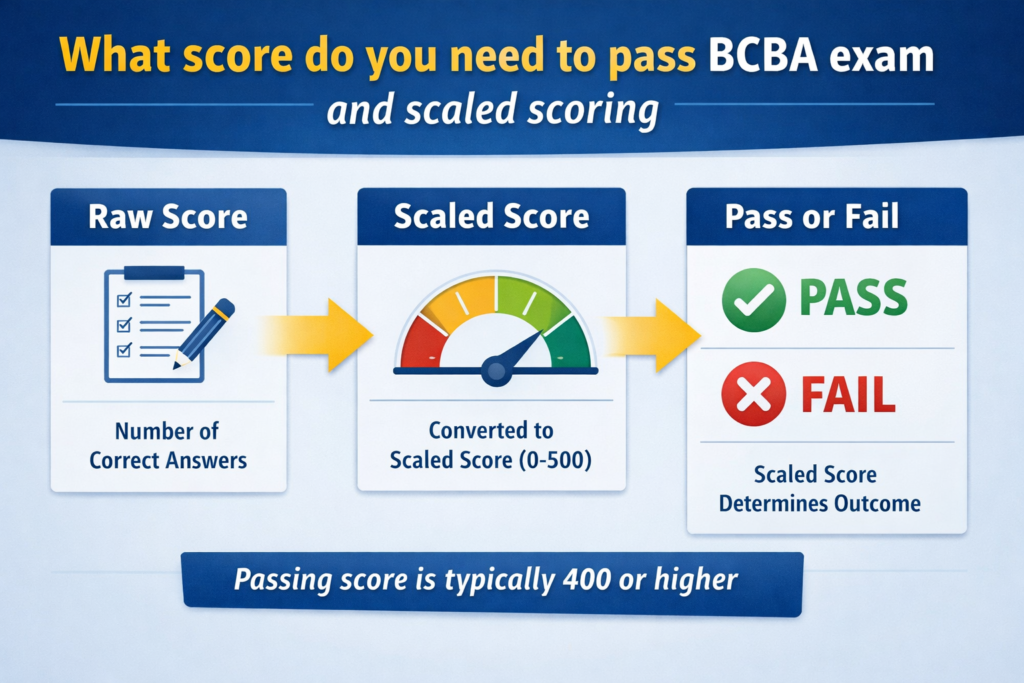

How This Shows Up on the BCBA Exam (What to Listen For)

On exam questions, clues often point to the best assessment:

- “Rank order” needed + time available → paired-stimulus or MSWO

- Learner can’t choose from arrays → single-stimulus

- Removal triggers problem behavior → free-operant

- Preferences changed recently → reassess before changing the whole plan

Study tip from building mock exams: many wrong answers are “technically related” but miss the key constraint (time, learner skill, or need for ranking vs screening). If you want realistic practice items that mirror that wording, explore the task-list aligned practice resources at BCBA Mock Exam.


Conclusion: Make Preference Assessments a Habit, Not a One-Time Event

The learner in front of you changes—sometimes daily—so ABA preference assessments should be a routine habit, not a checkbox. When you run them cleanly, you get better teaching momentum, fewer power struggles, and data you can defend clinically and on the exam. And when you treat preference as dynamic (not a label), your reinforcement system stays humane and effective.

If you’re prepping for the BCBA exam, share in the comments which preference assessment format trips you up most (MSWO vs paired-stimulus is a common one). Then take your next step with targeted study supports—mock exams, flashcards, and explanations designed to mirror the real test.

FAQ: ABA Preference Assessments

1) What is the goal of ABA preference assessments?

To identify and rank stimuli a learner is likely to choose, creating a preference hierarchy that helps clinicians select potential reinforcers.

2) How often should you run ABA preference assessments?

Re-run them when performance drops, when new stimuli are introduced, and on a routine schedule (often weekly or biweekly) because preferences shift.

3) What’s the difference between a preference assessment and a reinforcer assessment?

Preference assessments rank what is chosen; reinforcer assessments confirm what actually increases the target behavior under defined conditions.

4) Which ABA preference assessment is fastest?

MSWO and brief free-operant formats are typically faster than paired-stimulus, which is more time-intensive.

5) When should you use paired-stimulus instead of MSWO?

Use paired-stimulus when you need a more precise rank order and have time for more trials, especially with closely competing items.

6) Can social attention be included in ABA preference assessments?

Yes. Social, activity, autonomy-based, and sensory options can all be assessed, often improving dignity and generalization beyond tangible rewards.

7) What are common data measures for preference assessments?

Selection order (rank), approach/no approach, engagement duration, and latency to choose—plus notes about context and any problem behavior.